Why Small Language Models Beat Large Models For Enterprise AI

Everyone rushed to plug powerful LLMs into their business.

Then the first cloud bill arrived… and the security team joined the meeting.

The reality: most enterprises don’t need the biggest model. They need the right‑sized one… something reliable, affordable, and easy to control. That’s where Small Language Models (SLMs) quietly win over Large Language Models (LLMs) for serious enterprise work.

At Engenies, we see a simple pattern:

LLMs are great for exploration. SLMs are great for execution.

What are LLMs and SLMs… in human language?

Let’s define the two without any AI jargon.

- LLMs (Large Language Models)

Huge, general‑purpose models with tens or hundreds of billions of parameters, trained on internet‑scale data to talk about almost anything.

Think of them as “encyclopedias that can chat.”

- SLMs (Small Language Models)

Much smaller models… usually millions to a few billion parameters… tuned for a narrower set of tasks, often in a specific industry or domain.

Think of them as “specialists who know your business deeply.”

In enterprises, specialists usually create more value than generic geniuses.

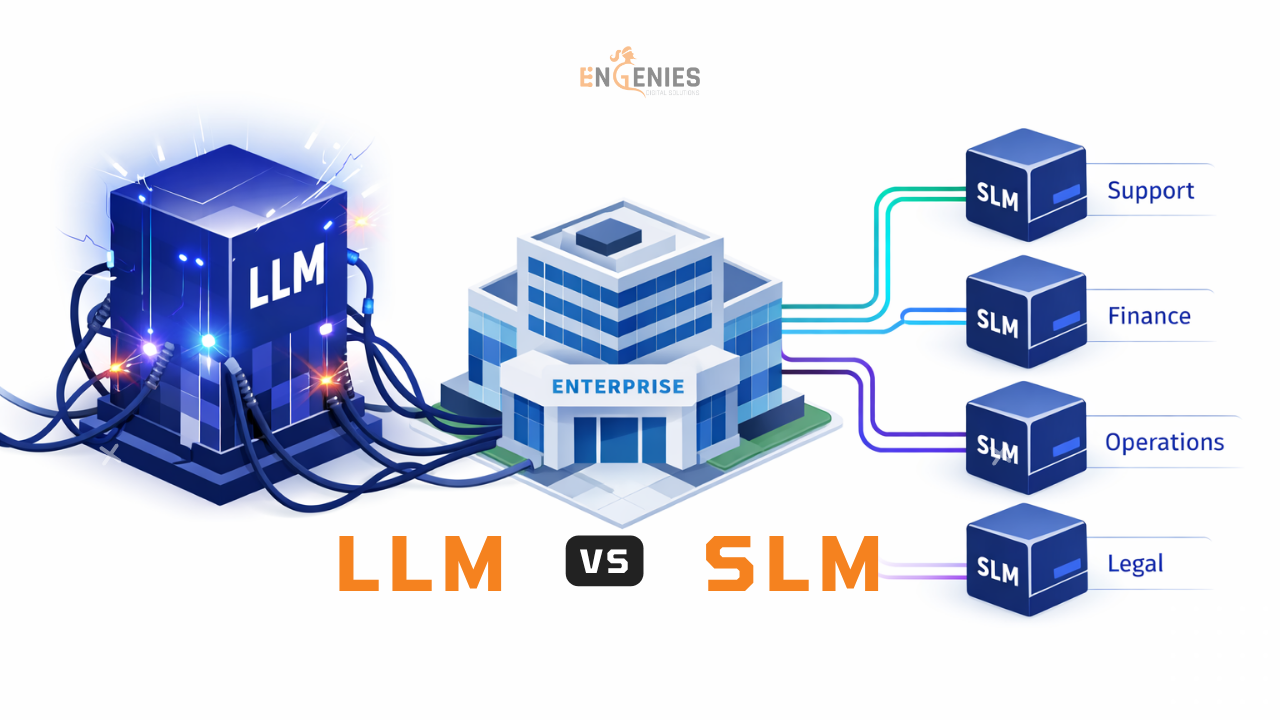

The SLM vs LLM picture at a glance

| Dimension | Large Language Models (LLMs) | Small Language Models (SLMs) |
|---|---|---|
| Typical size | 30B–175B+ parameters | Few million to ~1–8B parameters |
| Knowledge scope | Broad, general knowledge across many domains | Narrow, domain‑specific knowledge |
| Infra & cost | Needs expensive GPUs, high infra, high inference cost | Runs on commodity hardware, much cheaper to serve |
| Latency | 300–2000 ms typical cloud latency | Often 10–50 ms on edge / on‑prem |
| Privacy & control | Often SaaS/API, data leaves your perimeter | Can run fully on‑prem or VPC, strong control |
| Customization | Fine‑tuning is powerful but costly and slow | Easier, faster, cheaper to fine‑tune for domains |

Now let’s get practical: why should an enterprise choose an SLM first, not an LLM, for most workloads?

1. The money question: cost and scalability

Sooner or later, someone asks: “This AI thing is cool, but why is our infra bill exploding?”

- Training and running LLMs at scale needs expensive GPU infra, high energy, and serious engineering effort.

- Industry estimates put the cost of training a frontier‑scale LLM in the millions of dollars per run, with correspondingly large energy consumption.

- Even if you’re “just using an API,” large‑volume usage gets expensive quickly.

SLMs flip the equation:

- They have far fewer parameters and lower compute needs, so they can run on regular servers, even edge devices.

- Some businesses report cost reductions of 50–90% or more when switching targeted workloads from LLMs to well‑tuned SLMs.

A real‑life‑style example

Imagine a support operation answering 1 million tickets a month:

- LLM approach: great answers, but per‑request cost makes finance very nervous.

- SLM approach: a smaller, domain‑tuned model that handles 80–90% of tickets accurately at a fraction of the per‑ticket cost.

At scale, “small” saves millions.
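The arithmetic behind that comparison can be sketched in a few lines. This is only a back‑of‑envelope model: the per‑ticket prices are hypothetical placeholders, not quotes from any provider, and the 85% share is simply the midpoint of the 80–90% range above. Plug in your own numbers.

```python
# Back-of-envelope monthly cost for the support example above.
# All prices are hypothetical placeholders; substitute your own figures.

TICKETS_PER_MONTH = 1_000_000
LLM_COST_PER_TICKET = 0.02   # assumed USD per ticket via a large hosted model
SLM_COST_PER_TICKET = 0.002  # assumed USD per ticket on a self-hosted SLM
SLM_SHARE = 0.85             # midpoint of the 80-90% range in the text


def monthly_cost(tickets: int, slm_share: float) -> float:
    """Cost when `slm_share` of tickets go to the SLM and the rest to the LLM."""
    slm_tickets = round(tickets * slm_share)
    llm_tickets = tickets - slm_tickets
    return slm_tickets * SLM_COST_PER_TICKET + llm_tickets * LLM_COST_PER_TICKET


llm_only = monthly_cost(TICKETS_PER_MONTH, slm_share=0.0)
hybrid = monthly_cost(TICKETS_PER_MONTH, slm_share=SLM_SHARE)
savings_pct = 100 * (llm_only - hybrid) / llm_only

print(f"LLM only: ${llm_only:,.0f}/month")
print(f"Hybrid:   ${hybrid:,.0f}/month")
print(f"Savings:  {savings_pct:.1f}%")
```

The absolute figures depend entirely on the assumed prices and volume. The useful output is the percentage, which shows how quickly savings grow as more tickets move to the smaller model.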

2. Domain accuracy: knowing your world, not the whole internet

Most enterprise problems are not “general knowledge” problems. They’re very specific:

- Diagnosing a particular machine fault code.

- Interpreting clauses in your contract templates.

- Handling claims under your policy rules.

Here’s the catch:

- LLMs are trained for breadth. Great for general Q&A, weaker on narrow, highly specific language unless heavily customized.

- SLMs are trained or fine‑tuned on focused, high‑quality domain data, so they speak your domain’s language fluently.

Studies and benchmarks show:

- Fine‑tuned SLMs outperform GPT‑4 on 80–85% of classification and extraction tasks when trained on domain data.

- In many enterprise‑type tasks (classification, routing, extraction), SLMs achieve up to 80% of LLM performance with ~10% of the parameters… at a far lower cost.

In plain words: for most day‑to‑day enterprise work, a focused SLM is often more accurate and more predictable than a giant generalist.

3. Privacy, security, and compliance: sleep‑better‑at‑night factor

With API‑based LLMs, prompts and documents often leave your perimeter on every call. For sensitive data, that is usually the first thing the security team asks about.

SLMs change the picture:

- They are small enough to run fully on‑prem or inside your VPC, so sensitive data does not have to be sent to an external API.

- You keep strong control over where the model runs and what data it sees.

4. Governance and auditability: from “magic” to “manageable”

Enterprises don’t just want “smart” systems… they want governable systems.

SLMs are easier to govern because:

- Their scope is narrower, so you can define clearer guardrails, allowed behaviors, and abstention rules.

- They integrate well into standard MLOps stacks: registries, versioning, A/B testing, rollbacks, telemetry, and continuous evaluation.

This makes it easier to answer questions like:

- “What changed between model v3 and v4?”

- “Why did the model give this answer?”

- “How do we roll back if something goes wrong?”

With LLMs as external black boxes, these questions are much harder to answer.
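To show what “manageable” can look like in practice, here is a minimal sketch of a model registry that answers two of those questions: what changed between versions, and how to roll back. It is a toy in‑memory illustration, not any particular MLOps product; the class names, fields, dataset identifiers, and scores are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ModelVersion:
    version: str
    training_data: str    # identifier of the dataset snapshot used (hypothetical)
    eval_accuracy: float  # score on a fixed evaluation set (hypothetical)
    notes: str = ""


@dataclass
class ModelRegistry:
    """Toy in-memory registry; a real stack would persist this state."""
    versions: dict[str, ModelVersion] = field(default_factory=dict)
    history: list[str] = field(default_factory=list)  # promoted versions, oldest first

    def register(self, mv: ModelVersion) -> None:
        self.versions[mv.version] = mv

    def promote(self, version: str) -> None:
        if version not in self.versions:
            raise KeyError(f"unknown version: {version}")
        self.history.append(version)

    @property
    def live(self) -> str | None:
        return self.history[-1] if self.history else None

    def rollback(self) -> str:
        """Drop the live version and return the one that is live afterwards."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.history[-1]

    def diff(self, old: str, new: str) -> dict[str, tuple]:
        """Return {field: (old_value, new_value)} for every field that differs."""
        a, b = self.versions[old], self.versions[new]
        names = ("training_data", "eval_accuracy", "notes")
        return {n: (getattr(a, n), getattr(b, n)) for n in names
                if getattr(a, n) != getattr(b, n)}


registry = ModelRegistry()
registry.register(ModelVersion("v3", "claims-snapshot-a", 0.91))
registry.register(ModelVersion("v4", "claims-snapshot-b", 0.93, notes="added abstention rule"))
registry.promote("v3")
registry.promote("v4")

changes = registry.diff("v3", "v4")  # "What changed between model v3 and v4?"
restored = registry.rollback()       # "How do we roll back if something goes wrong?"
print(changes)
print("live after rollback:", restored)
```

Keeping the promotion history as an ordered list is a deliberate simplification: it makes rollback a single pop, at the cost of not recording who promoted what or when.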

5. Latency and UX: speed is a feature

Users hate waiting. Whether it’s an internal tool or a customer‑facing assistant, speed matters.

- Cloud LLM calls often take 300–2000 ms for the first token.

- Edge‑deployed SLMs can respond in 10–50 ms.

That gap is the difference between:

- “This feels instant” vs “This tool is slow.”

- “Let’s fully automate this flow” vs “We still need humans in the loop because the bot is sluggish.”

The smaller footprint of SLMs also makes them ideal for:

- Running in stores, factories, warehouses, and field‑service devices where connectivity is patchy.

- Mobile and embedded experiences where you simply can’t host a giant model.
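Latency figures like the ones above are worth measuring in your own environment instead of taking them on faith. The sketch below times any callable and reports p50 and p95 in milliseconds. `fake_model` is a stand‑in that just sleeps; swap in a real call to your own model. Note that it measures total call time, not time to first token.

```python
import statistics
import time
from typing import Callable


def latency_percentiles(call: Callable[[], object], runs: int = 50) -> dict[str, float]:
    """Time `call` repeatedly and return p50 and p95 latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    cuts = statistics.quantiles(samples, n=100)  # 99 cut points: cuts[i] is percentile i+1
    return {"p50_ms": cuts[49], "p95_ms": cuts[94]}


def fake_model() -> None:
    """Stand-in for a model call; sleeps for roughly 2 ms."""
    time.sleep(0.002)


stats = latency_percentiles(fake_model, runs=20)
print(f"p50: {stats['p50_ms']:.1f} ms, p95: {stats['p95_ms']:.1f} ms")
```

Reporting p95 alongside the median matters for UX: a tool that is usually fast but occasionally stalls still feels slow to the people using it.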

6. Customization and iteration speed

Trying to fine‑tune a giant LLM for every niche use case is like taking a rocket to the grocery store.

SLMs make experimentation practical:

- They’re cheaper and faster to fine‑tune on your own data.

- Knowledge distillation compresses a larger “teacher” model into an efficient SLM “student,” and pruning and quantization shrink models further, all while retaining most of the capabilities that matter for the task.
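Of those techniques, quantization is the easiest to illustrate in a few lines. The sketch below shows symmetric per‑tensor int8 quantization in plain Python: weights are mapped to integers in [-127, 127] with a single scale factor, then mapped back. It is a simplified illustration with made‑up weights; production toolchains typically add calibration, per‑channel scales, and other refinements.

```python
from __future__ import annotations


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map quantized integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.05, 0.88, -0.31]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.5f}")
```

Each weight now fits in one byte instead of four (for float32), and the round‑trip error is bounded by half the scale factor. That trade of a little precision for a much smaller footprint is what lets models fit on commodity or edge hardware.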

This means AI teams can:

- Ship more domain‑specific models.

- Iterate faster.

- Maintain multiple specialized SLMs for different workflows or business units.

In other words… your AI can evolve at business speed, not research‑lab speed.

7. When should you still use an LLM?

Despite all this, LLMs are not obsolete. They’re just not the default answer to every problem.

LLMs make sense when you need:

- Broad, cross‑domain reasoning and open‑ended exploration.

- Creative generation and synthesis across disparate knowledge sources.

- Low‑volume, high‑value tasks where per‑call cost is less important.

Most mature enterprises end up with a hybrid architecture:

- SLMs handle the high‑volume, well‑defined, domain‑specific work that powers core operations.

- LLMs are reserved for the minority of tasks that truly need open‑ended, cross‑domain intelligence.
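A hybrid setup like this can be sketched as a small router: try the SLM first, and escalate to the LLM only when the SLM is not confident. Everything here is illustrative. The `toy_slm` and `toy_llm` functions are stubs, and the sketch assumes your SLM can return a usable confidence score, which in practice needs calibration against your own data. The 0.8 threshold is an arbitrary placeholder.

```python
from __future__ import annotations

from typing import Callable

# A model is any callable returning (answer, confidence in [0, 1]).
Model = Callable[[str], tuple[str, float]]


def make_router(slm: Model, llm: Model, threshold: float = 0.8) -> Callable[[str], tuple[str, str]]:
    """Build a router that prefers the SLM and escalates low-confidence queries."""
    def route(query: str) -> tuple[str, str]:
        answer, confidence = slm(query)
        if confidence >= threshold:
            return answer, "slm"
        answer, _ = llm(query)  # SLM abstains; fall back to the larger model
        return answer, "llm"
    return route


def toy_slm(query: str) -> tuple[str, float]:
    """Stub SLM: confident only on queries it was 'tuned' for."""
    known = {"reset password": "Use the self-service portal."}
    if query in known:
        return known[query], 0.95
    return "", 0.1


def toy_llm(query: str) -> tuple[str, float]:
    """Stub LLM: answers anything, generically."""
    return f"[general answer to: {query}]", 0.7


route = make_router(toy_slm, toy_llm)
for q in ("reset password", "compare our pricing to market trends"):
    answer, handled_by = route(q)
    print(f"{handled_by}: {answer}")
```

Logging which model handled each request is useful in its own right: the share of traffic that escalates tells you where the SLM needs more domain data.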

The real question is not “SLM or LLM?”

It’s: “Which is the smallest, safest model that meets this task’s requirements?”
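That question translates almost directly into code: filter candidate models by the task’s requirements, then pick the smallest one left. The sketch below assumes you have already measured each candidate on your own evaluation set. The model names and all numbers are hypothetical placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    params_billions: float
    accuracy: float        # measured on your own evaluation set
    p95_latency_ms: float
    runs_on_prem: bool


@dataclass(frozen=True)
class Requirements:
    min_accuracy: float
    max_latency_ms: float
    must_run_on_prem: bool


def smallest_fit(candidates: list[Candidate], req: Requirements) -> Candidate | None:
    """Return the smallest candidate that meets every requirement, or None."""
    eligible = [
        c for c in candidates
        if c.accuracy >= req.min_accuracy
        and c.p95_latency_ms <= req.max_latency_ms
        and (c.runs_on_prem or not req.must_run_on_prem)
    ]
    return min(eligible, key=lambda c: c.params_billions, default=None)


# Hypothetical candidates with placeholder measurements.
candidates = [
    Candidate("hosted-llm", 175.0, 0.95, 900.0, False),
    Candidate("slm-7b", 7.0, 0.93, 45.0, True),
    Candidate("slm-1b", 1.0, 0.86, 15.0, True),
]

ticket_routing = Requirements(min_accuracy=0.90, max_latency_ms=100.0, must_run_on_prem=True)
choice = smallest_fit(candidates, ticket_routing)
print(choice.name if choice else "no candidate meets the requirements")
```

When nothing qualifies, the function returns `None` instead of silently picking the biggest model. That forces an explicit decision: relax a requirement, improve the SLM, or accept an LLM for that task.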

How to build a domain‑specific SLM (The Engenies blueprint)

Here’s a simple, pragmatic framework we use at Engenies to help enterprises go from “idea” to “production‑grade SLM.”

How Engenies can help you think smaller (in a good way)

At Engenies, our stance is simple:

Bigger models don’t guarantee bigger outcomes. Better fit does.

We typically help enterprises in three ways:

- Strategic model selection

  - Map your use cases to the right model type (SLM, LLM, or hybrid)

  - Quantify trade‑offs: cost per task, latency, accuracy, and compliance risk

- Design and build domain‑specific SLMs

  - Curate and structure your domain data

  - Select and adapt base SLMs

  - Implement guardrails, retrieval, and evaluation pipelines tailored to your workflows

- Production‑grade deployment and governance

  - Deploy SLMs on‑prem or in your VPC

  - Set up monitoring, versioning, and change management so AI becomes a safe, repeatable capability… not a one‑off experiment

Ready to right‑size your AI?

If you’re:

- Struggling with LLM costs or latency.

- Nervous about sending sensitive data to external APIs.

- Seeing “demo magic” but not production ROI.

…then it’s time to look seriously at SLMs.

Here’s what you can do next with Engenies:

Think of it this way:

If AI is going to touch every workflow in your enterprise, you don’t want a few giant models you can barely afford to run.

You want many small, specialized models that quietly power the business… fast, safe, and at a cost your CFO can live with.

Engenies is here to help you build exactly that. Write to us with your thoughts: wish@engenies.com